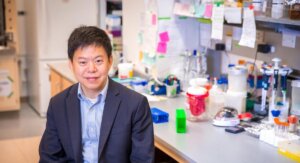

Xiang Ren, who has recently won several awards for his work in natural language processing. Photo/Haotian Mai.

What makes a good idea, good? Why do some innovations—like the touchscreen—thrive, while others fall into obscurity? Remember the minidisc, anyone?

Xiang Ren, an assistant professor in USC Viterbi’s Department of Computer Science, is working with social scientists at Stanford University on a new project that aims to discover why some concepts are adopted while others fade into the annals of time.

An expert in natural language processing, Ren is using knowledge extraction techniques to identify scientific concepts found in massive amounts of text data, collected since 1920, including research papers, books, patents, Wikipedia and popular science publications.

With these concepts in hand, the researchers will then look for patterns to see what successful innovations have in common and how their popularity waxes and wanes over time.

The two-year project, funded by the National Science Foundation, involves processing millions of documents spanning more than 100 disciplines to observe how new ideas and new concepts evolve. The goal is to use this knowledge to accelerate scientific discovery.

“No person can read through all this text,” said Ren, who holds a joint appointment at the USC Information Sciences Institute. “This is why we need some sort of automatic text processing techniques to organize and analyze this information.”

Ren recently received several awards to investigate this technique, known as information extraction—or translating a collection of texts into sets of facts—including an Amazon Research Award, a Google AI Faculty Research Award and a JP Morgan AI Research Award.

In April, he was named in the 2019 Forbes Asia 30 Under 30 list, featuring 300 entrepreneurs and young leaders who are contributing to their industries and making an impact in society.

“It is an honor to have my work recognized by such large and prestigious companies and organizations,” said Ren, who joined USC in 2017 after earning his Ph.D. from the University of Illinois, Urbana-Champaign.

“To me, information extraction is a fascinating and challenging puzzle to be solved. It is humbling to know that many other people appreciate and can benefit from this work.”

Turning Data into Knowledge

Turning data into knowledge is one of the biggest challenges in the big data age. It can be useful for a range of applications, from discovering the genes related to a specific disease, to detecting terrorist attack-related information on web forums.

But how can we wrangle, and make sense of, the gigantic amount of text produced every day?

“If you took all the tweets written in just one day and turned them into books, the pile would be as high as the Empire State Building,” said Ren.

Not only that, but people are now putting their thoughts into many different types of text data. To find patterns, you need a system that can understand them all and then apply what it has “learned” to never-before-seen texts.

“Text on social media looks different from a news article, and a news article will use different terms from an academic paper,” said Ren. “My method works on many kinds of text data, which is exactly what is needed for these kinds of knowledge discovery projects.”

A Tricky Challenge

Mining knowledge from unstructured data (text collected in a wide range of forms, from social media sites to academic papers) is nevertheless a tricky challenge, said Ren.

In earlier natural language processing techniques, the machine-learning model learns how to interpret text from being fed high volumes of human-annotated text data. For instance: NASA is an organization; an astronaut is a profession; Christina Koch is a person. But, in addition to being a laborious and expensive process, it turns out humans aren’t actually terribly good at annotating massive amounts of texts without making errors.

In fact, found Ren, machines do better without our help. So, he developed a technique to mine concepts from data without much human intervention at all. His Ph.D. thesis on this topic, titled Mining Entity and Relation Structures from Text: An Effort-Light Approach, received an ACM SIGKDD Doctoral Dissertation Award in 2018.

The idea is this: Instead of using human annotations as training material, the algorithm automatically generates training examples by referencing pre-existing, human-curated knowledge bases such as Wikipedia. Then, it digs out language patterns from text based on the training examples and applies the same rules to any new texts encountered. In other words, it learns by looking for the semantic relationships, or meaning, between entities—for instance, people, places, activities or events.
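The steps described above can be illustrated with a toy sketch. This is not Ren's actual system: the tiny knowledge base, the `works_for` relation name, the function names, and the "phrase between two entities" pattern are all simplifying assumptions made for illustration. The sketch labels sentences automatically by matching known entity pairs, collects the connecting phrases as patterns, and reuses those patterns on a sentence it has never seen.

```python
import re
from collections import Counter

# Hypothetical toy knowledge base: (subject, object) -> relation.
# A real system would draw on a large curated resource instead.
KNOWLEDGE_BASE = {
    ("Christina Koch", "NASA"): "works_for",
    ("Xiang Ren", "USC"): "works_for",
}

def label_sentences(sentences, kb):
    """Auto-label sentences that mention a known entity pair (no human annotation)."""
    examples = []
    for sentence in sentences:
        for (subj, obj), relation in kb.items():
            s, o = sentence.find(subj), sentence.find(obj)
            if s != -1 and o != -1 and s < o:
                # The words between the two entities serve as the language pattern.
                between = sentence[s + len(subj):o].strip()
                if between:
                    examples.append((between, relation))
    return examples

def learn_patterns(examples):
    """For each connecting phrase, keep the relation it most often signals."""
    counts = {}
    for phrase, relation in examples:
        counts.setdefault(phrase, Counter())[relation] += 1
    return {phrase: c.most_common(1)[0][0] for phrase, c in counts.items()}

def extract(sentence, patterns):
    """Apply learned patterns to unseen text; return (subject, relation, object) triples."""
    # Assumption: an entity is a run of capitalized words.
    name = r"([A-Z]\w*(?: [A-Z]\w*)*)"
    triples = []
    for phrase, relation in patterns.items():
        regex = name + r"\s+" + re.escape(phrase) + r"\s+" + name
        for match in re.finditer(regex, sentence):
            triples.append((match.group(1), relation, match.group(2)))
    return triples

corpus = [
    "Christina Koch works for NASA.",
    "Xiang Ren works for USC.",
]
patterns = learn_patterns(label_sentences(corpus, KNOWLEDGE_BASE))

# "Jane Doe" and "Acme Labs" are made-up names absent from the knowledge base.
triples = extract("Jane Doe works for Acme Labs.", patterns)
print(triples)  # [('Jane Doe', 'works_for', 'Acme Labs')]
```

The new sentence mentions entities that appear nowhere in the knowledge base, yet the pattern learned from the automatically labeled sentences still recovers the relation. Real systems have to cope with noisy matches and far more varied phrasing than this sketch handles.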

“It seems pretty magical, right?” said Ren. “But basically, it learns from human prior knowledge. You don’t have to have a human annotate it, because you’re pulling from data that already exists. I skip the most expensive and time-consuming step in the machine learning pipeline, which is human annotation of labeled data.”

It’s closer, said Ren, to how humans perceive text: if you’re trying to understand context, you look at the surrounding words in the sentence.

“These patterns can help you find out new concepts in the data sets we have,” said Ren.

“News articles are emerging every day, academic papers are emerging every day. This is new data, but the patterns found in existing texts can be reused by the system to understand the text in the new data.”

Ren hopes his future work can expand on that. He’s also working to make the black-box machine learning models more accessible, so that domain experts can understand why certain words are extracted by the model to reach conclusions.

“Eventually, it could even automate some scientific discovery processes—reading millions of papers and extracting the salient information for scientists to analyze,” said Ren. “Automating this process saves money and it also allows us to analyze text data for patterns at a much larger scale, so your decision-making can be more informed. If we want to make data work for us as a society, this process is crucial.”

Published on April 22nd, 2019

Last updated on April 22nd, 2019