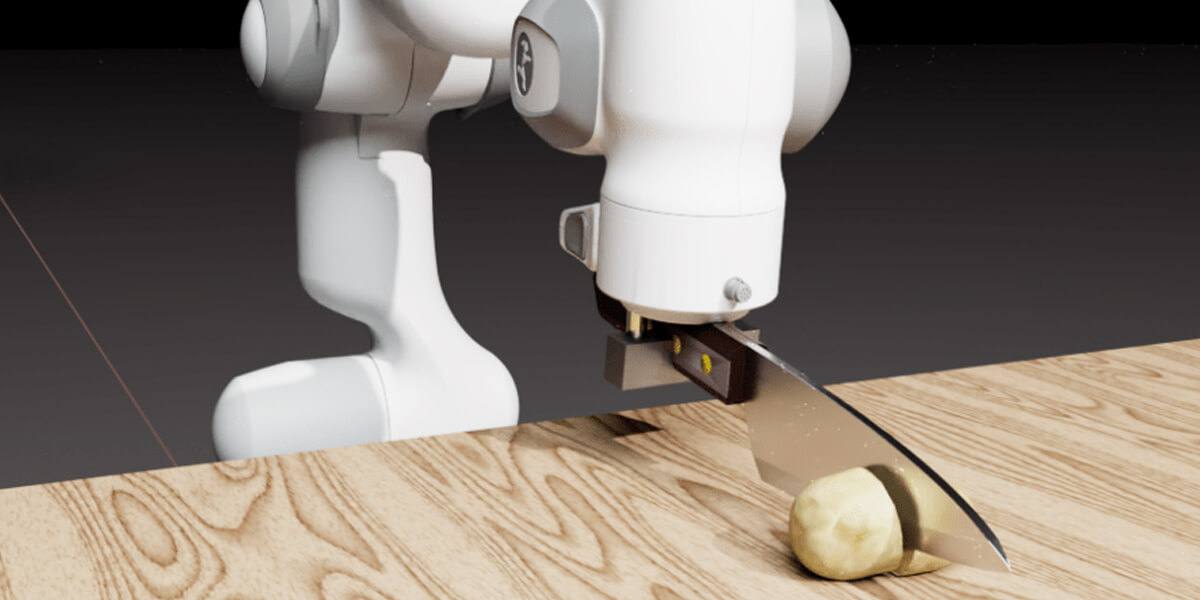

USC PhD student Eric Heiden, working with NVIDIA researchers, has created a new simulator for robotic cutting that can accurately reproduce the forces acting on the knife as it presses and slices through common foodstuffs. Image/Eric Heiden/NVIDIA.

Researchers from USC’s Department of Computer Science showcased cutting-edge research at the Robotics: Science and Systems (RSS) 2021 conference, held July 12-16, 2021. The annual conference, held virtually this year, brings together leading robotics researchers and students to explore real-world applications of robotics, AI and machine learning. From creating realistic simulation environments, to robot “job interviews” that catch errors before deployment, to cutting simulations that could improve robotic surgery, USC researchers are forging new paths in robotics.

Simulating realistic cutting

PhD student Eric Heiden and NVIDIA researchers unveiled a new simulator for robotic cutting that can accurately reproduce the forces acting on the knife as it presses and slices through common foodstuffs, such as fruit and vegetables. The system could also simulate cutting through human tissue, offering potential applications in surgical robotics. The paper received the Best Student Paper Award at RSS 2021.

The team devised a unique approach to simulate cutting by introducing springs between the two halves of the object being cut, represented by a mesh. These springs are weakened over time in proportion to the force exerted by the knife on the mesh. In the past, researchers have had trouble creating intelligent robots that replicate cutting behavior.
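The spring-weakening idea can be illustrated with a toy sketch. This is not the team's simulator: the function names, the `rate` constant and the fixed per-spring knife forces are all assumptions made for illustration, and a real simulator would recompute the forces at every step from the mesh and knife geometry.

```python
def weaken_springs(stiffness, knife_forces, rate, dt):
    """Lower each spring's stiffness in proportion to the knife force on it.

    `rate` is a hypothetical material constant; stiffness is clamped at zero,
    which stands for a spring that has been fully cut.
    """
    return [max(0.0, k - rate * f * dt) for k, f in zip(stiffness, knife_forces)]


def simulate_cut(stiffness, knife_forces, rate=0.5, dt=0.01, steps=100):
    """Apply the weakening rule repeatedly and record stiffness after each step."""
    history = []
    for _ in range(steps):
        stiffness = weaken_springs(stiffness, knife_forces, rate, dt)
        history.append(list(stiffness))
    return history


if __name__ == "__main__":
    # Three springs along the cut: untouched, lightly pressed, directly under the blade.
    final = simulate_cut([10.0, 10.0, 10.0], [0.0, 5.0, 40.0])[-1]
    print(final)
```

In this sketch the spring that feels no force keeps its stiffness, while the one under the blade weakens fastest and eventually drops to zero, at which point the two halves are no longer held together there.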

“What makes ours a special kind of simulator is that it is ‘differentiable’ which means that it can help us automatically tune these simulation parameters from real-world measurements,” said Heiden. “That’s important because closing this reality gap is a significant challenge for roboticists today. Without this, robots may never break out of simulation into the real world.”
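To give a sense of what tuning simulation parameters from measurements means, here is a minimal sketch under strong assumptions. The "simulator" is a hypothetical one-parameter model in which force equals stiffness times knife depth, and the gradient is derived by hand rather than by the automatic differentiation a differentiable simulator would provide. The names `simulate_force` and `fit_stiffness`, the learning rate and the data are all illustrative.

```python
def simulate_force(stiffness, depths):
    """Toy stand-in for a simulator: predicted knife force at each depth."""
    return [stiffness * d for d in depths]


def loss_and_grad(stiffness, depths, measured):
    """Mean squared error against measurements, and its derivative w.r.t. stiffness."""
    n = len(depths)
    predicted = simulate_force(stiffness, depths)
    residuals = [p - m for p, m in zip(predicted, measured)]
    loss = sum(r * r for r in residuals) / n
    # d(loss)/d(stiffness), since d(predicted_i)/d(stiffness) = depth_i
    grad = sum(2.0 * r * d for r, d in zip(residuals, depths)) / n
    return loss, grad


def fit_stiffness(depths, measured, init=1.0, lr=0.5, steps=200):
    """Gradient descent on the single simulation parameter."""
    k = init
    for _ in range(steps):
        _, g = loss_and_grad(k, depths, measured)
        k -= lr * g
    return k


if __name__ == "__main__":
    depths = [0.1 * i for i in range(1, 11)]
    measured = simulate_force(3.0, depths)  # pretend these came from a real robot
    print(fit_stiffness(depths, measured))
```

Because the gradient says which way to nudge the parameter, the fit recovers the stiffness that generated the measurements without any manual tuning. The same principle applies when there are many parameters and a far more complex simulation.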

To transfer from simulation to reality, the simulator must be able to model a real system. In one of the experiments, the researchers used a dataset of force profiles from a physical robot to produce highly accurate predictions of how the knife would move in real life. In addition to applications in the food processing industry, where robots could take over dangerous tasks like repetitive cutting, the simulator could improve the accuracy of haptic force feedback in surgical robots, helping to guide surgeons and prevent injury.

“Here, it is important to have an accurate model of the cutting process and to be able to realistically reproduce the forces acting on the cutting tool as different kinds of tissue are being cut,” said Heiden. “With our approach, we are able to automatically tune our simulator to match such different types of material and achieve highly accurate simulations of the force profile.” In ongoing research, the team hopes to find energy-efficient ways to apply cutting actions to real robots.

Making safer autonomous robotic systems

Ensuring autonomous system safety is one of today’s most complex and important technological challenges. Whether you’re dicing vegetables with a robot chef, driving to work in an autonomous car, or going under the knife with a surgical robot—there is no room for error.

But as robots become more complex and commonplace, it becomes harder to predict their behavior in every possible situation. Typically, robots that work with humans are tested in a lab with human subjects to see how they behave. But these experiments provide limited insights into the robot’s behavior when deployed long-term in messy real-world scenarios.

What if, instead, you could run a huge number of simulations to identify potentially catastrophic errors in robotic systems before deployment?

In two papers accepted at RSS 2021, lead author Matt Fontaine, a PhD student, and his supervisor Stefanos Nikolaidis, an assistant professor in computer science, present a new framework to generate scenarios that automatically reveal undesirable robot behavior. Like a tough job interview, the tests put robots through their paces before they interact with humans in safety-critical settings.

The researchers used a class of algorithms named “quality diversity algorithms” to find a collection of diverse, relevant and challenging scenarios that vary in aspects such as how the scene is arranged, or how the workload is distributed between the human and the robot. Specifically, the team attempted to find failure cases that are unlikely to be observed when testing the system manually, but may happen when deployed.
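As a rough illustration of how a quality diversity algorithm searches for scenarios, the sketch below implements a bare-bones version of MAP-Elites, one well-known algorithm in this family. It is not the authors' framework. The two scenario parameters (clutter and human workload), the `failure_score` function standing in for a full simulation, and all numeric settings are hypothetical. For simplicity the scenario parameters themselves are used as the diversity measures.

```python
import random


def failure_score(scenario):
    """Hypothetical stand-in for simulating a scenario and scoring how badly the robot does."""
    clutter, workload = scenario
    return clutter * (1.0 - abs(workload - 0.5))


def cell_of(scenario, bins):
    """Map a scenario with values in [0, 1] to a cell of a bins x bins grid."""
    return tuple(min(int(x * bins), bins - 1) for x in scenario)


def map_elites(iterations=2000, bins=5, sigma=0.1, seed=0):
    """Keep the most challenging scenario found in each cell of the grid."""
    rng = random.Random(seed)
    archive = {}  # cell -> (score, scenario)
    for _ in range(iterations):
        if archive and rng.random() < 0.9:
            # Mutate a scenario already in the archive, clamping to [0, 1].
            _, parent = rng.choice(list(archive.values()))
            child = tuple(min(1.0, max(0.0, x + rng.gauss(0.0, sigma))) for x in parent)
        else:
            # Occasionally sample a completely new scenario.
            child = (rng.random(), rng.random())
        score = failure_score(child)
        cell = cell_of(child, bins)
        if cell not in archive or score > archive[cell][0]:
            archive[cell] = (score, child)
    return archive


if __name__ == "__main__":
    archive = map_elites()
    print(len(archive), "distinct kinds of scenario found")
```

The design point is that the archive rewards difference as well as difficulty: a scenario only competes with others in its own cell, so the search returns a spread of hard cases across the whole space instead of many variations of a single failure.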

“It’s very easy to break a robotic system in a way that is not the system’s fault,” said Fontaine, who worked as a simulation engineer at a self-driving car startup before joining USC. “The space of scenarios is flooded with failure cases that are not relevant, like drivers driving unreasonably or roads that would never occur in the real world. Our approach could help discover failures that are relevant.”

In addition to identifying errors that may not have shown up in industry-standard robotic tests, they also found that simulation environments can greatly affect a robot’s behavior in collaborative settings.

“This is an extremely important insight as we all arrange our houses differently,” said Fontaine. “By creating these scenario generation systems, I’m hoping we can trust robotic systems enough to make them part of our everyday home life.”

Creating realistic training environments

Imagine trying to teach a robot to cook in your kitchen. The robot learns through trial and error and needs careful supervision to make sure it does not knock over plates or leave the stove on. Instead, what if you could train a similar robot in a laboratory with the proper safety precautions, then apply what was learned to your robot at home? There’s one problem: the kitchen in your home and the one in the laboratory are not identical. For example, they might look different and have different cookware, so the robot has to adjust how it cooks in the new environment.

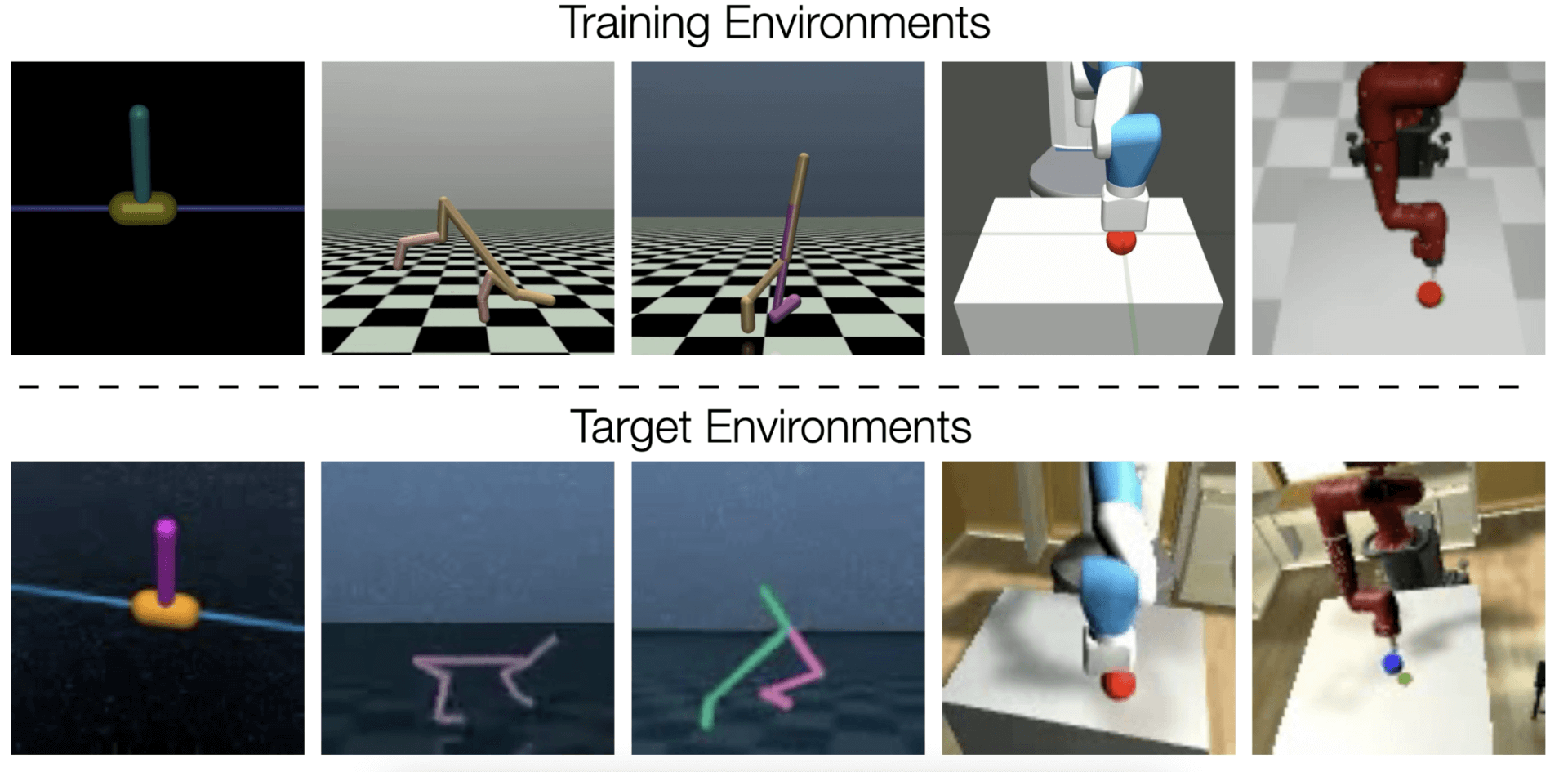

A new paper lead-authored by Grace Zhang, a PhD student, with supervisor Joseph Lim, a computer science assistant professor, helps robots easily transfer learned behavior from one environment to another by virtually modifying the training environment so it is similar to the real-world environment.

“This way, we can train the robot in one environment, like the laboratory, then directly deploy the trained robot in another, like a home kitchen,” said Zhang.

Transferring a behavior from the training environment (top row) to the target environment (bottom row) for five different tasks.

Unlike previous studies, which tackle only visual or physical differences—for instance, differences in kitchen interior or cookware weight—this new technique creates a more realistic scenario by tackling both differences at the same time.

The researchers hope these insights could make robots more efficient and practical in other real-life situations, such as search and rescue missions. From fitting between rubble to searching hard-to-reach places, ground robots can aid a human rescue team by quickly surveying large regions or going into dangerous areas. Using this new approach, the robot could gather data onsite, then do additional training in a simulator to adapt to the specific terrain.

“While we are still a long way from deploying automated robots in these critical situations, having a way to transfer behaviors from controllable training environments may be a first step,” said Zhang.

Robots planning motions to achieve goals

Much as humans must plan their actions to complete any task, from brushing their teeth to making a cup of coffee, motion planning in robotics deals with finding a path to move a robot from its current position to a target position without colliding with any obstacles. In the automation industry, from robotic chefs to warehouse and space robots, the order of motions is critical to the success of the overall goal.

The robot is able to complete both a simple pick and place task, and a more complex pick and pour task.

The ability to move through various motions quickly and accurately is especially important for robots that operate in environments like the home, which are not carefully controlled in structure. In addition, many robotic tasks require constrained motions, such as maintaining contact with a handle, or keeping a cup or bottle upright while transporting.

At USC, Peter Englert, a postdoctoral researcher, is working on giving robots the ability to reason about and plan motions over a long period of time. Englert is supervised by Gaurav Sukhatme, the Fletcher Jones Foundation Endowed Chair and a professor of computer science and electrical and computer engineering.

In a new paper presented at RSS 2021, Englert, Sukhatme and their co-authors achieve this by dividing a motion into multiple smaller motions that are described as geometric constraints regarding the robot’s position in its environment. The focus: finding when to switch from one motion to the next. Many manipulation tasks consist of a sequence of subtasks where each subtask can be solved in multiple ways. The specific solution chosen for one subtask might affect the outcome of future subtasks.
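The dependency between subtasks can be shown with a deliberately simplified sketch. The paper works with continuous motions described by geometric constraints. The code below swaps that for a small discrete search, and every subtask option and incompatibility listed is a made-up example, not taken from the paper.

```python
from itertools import product

# Hypothetical options for each subtask, in the order they are performed.
SUBTASKS = {
    "grasp": ["top", "side"],
    "transport": ["upright_carry"],
    "pour": ["tilt_left", "tilt_right"],
}

# Hypothetical pairs (earlier choice, later choice) that cannot be combined,
# e.g. a grasp from the top leaves no room to tilt the cup afterwards.
INCOMPATIBLE = {
    ("top", "tilt_left"),
    ("top", "tilt_right"),
    ("side", "tilt_left"),
}


def feasible(plan):
    """A plan works only if no earlier choice rules out a later one."""
    return all(
        (earlier, later) not in INCOMPATIBLE
        for i, earlier in enumerate(plan)
        for later in plan[i + 1:]
    )


def plan_task(subtasks):
    """Return the first combination of subtask solutions that works end to end."""
    names = list(subtasks)
    for choice in product(*(subtasks[name] for name in names)):
        if feasible(choice):
            return dict(zip(names, choice))
    return None


if __name__ == "__main__":
    print(plan_task(SUBTASKS))
    # prints {'grasp': 'side', 'transport': 'upright_carry', 'pour': 'tilt_right'}
```

A planner that picked the first available grasp without looking ahead would choose "top" and get stuck at the pouring step. Checking whole sequences is what lets the search reject that choice early.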

A running example in this paper is the task of using a robot arm to transport a mug from one table to another while keeping the orientation of the mug upright, a task involving multiple phases and constraints.

“A cup can be grasped in many different ways,” said Englert. “However, for certain grasps the robot might not be able to perform a subsequent pouring motion. Our method avoids such situations and intelligently plans how to perform the individual subtasks so that the robot succeeds at the overall task.” Co-author Isabel M. Rayas Fernández, a computer science PhD student, presented the work in a “Spotlight Talk.”

Published on July 16th, 2021

Last updated on May 17th, 2022