Photo Credit: smartboy10/Getty Images

In 68 CE, the Roman emperor Nero committed suicide. “Or did he?” asked the ancient conspiracy theorists who were convinced Nero faked his death and was secretly planning a return to power.

Conspiracy theories – explanations for events that involve secret plots by sinister and influential groups, often for political gain – are not a new human phenomenon. What is new, however, is the way they spread. In Nero’s day, word of mouth could only get a conspiracy theory so far. Today, social media platforms can give conspiracy theories a far greater reach.

Research scientist Luca Luceri and a team from USC Information Sciences Institute (ISI), a research institute of the USC Viterbi School of Engineering, set out to better understand how conspiracy theories spread on social media, and what can be done to stop them. Specifically, they looked at how Twitter users are radicalized within the QAnon conspiracy theory.

Their resulting paper, Identifying and Characterizing Behavioral Classes of Radicalization within the QAnon Conspiracy on Twitter, has been accepted to the 2023 International AAAI Conference on Web and Social Media (ICWSM).

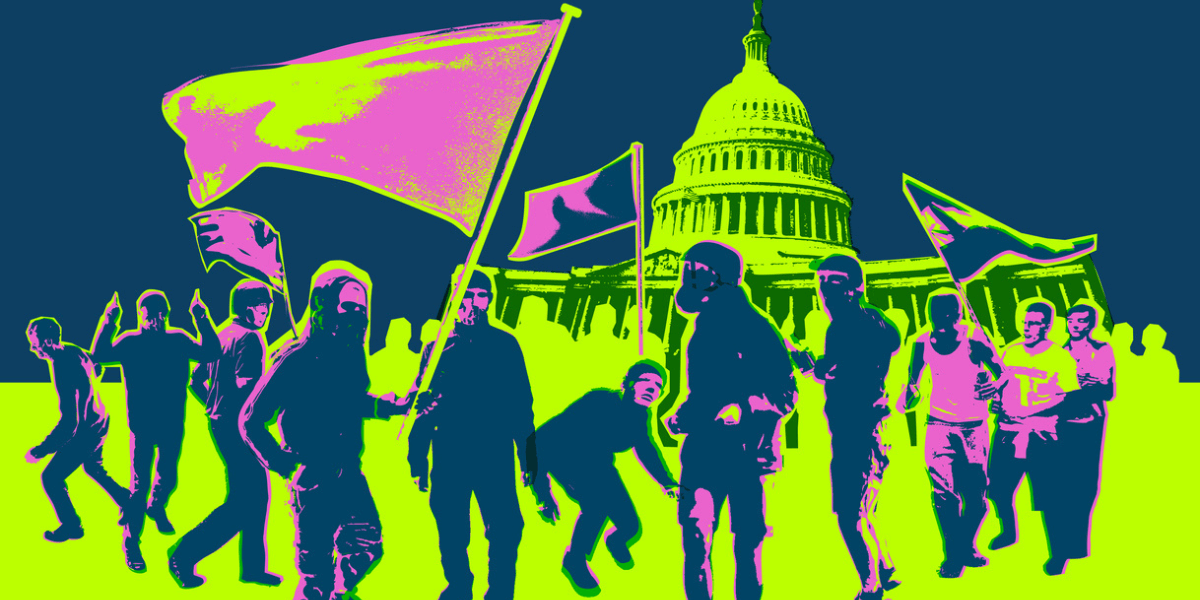

Online Tweets Can Become IRL Actions

“The motivation for looking at QAnon,” said Luceri, “was that it’s a recent, high-profile example of how dangerous a conspiracy theory that originates online and migrates into real life can be. Its supporters were among various online promoters of the attack on the Capitol.”

Why did the team choose to look at Twitter? Luceri explained, “That’s where the story migrates to mainstream media. The moment these stories get traction online in mainstream media is the moment when they might have an impact on real life.”

The team used a dataset of election-related tweets collected using Twitter’s streaming API service. They observed over 240 million tweets from an 11-week period before the 2020 U.S. election. This dataset included original tweets, replies, retweets, and quote retweets shared by over 10 million unique users.

Moderating Q

In order to keep conspiracy theories like QAnon from spreading online, Twitter enacted moderation strategies leading up to the 2020 U.S. Presidential election.

Luceri said, “The Twitter policies were meant to purge Twittersphere of QAnon content. The interventions were quite effective in removing or suspending users that were generating a lot of original content. But we found evidence that QAnon content was still there, and in particular, less evident radicalized behaviors could escape this moderation intervention.”

So, the ISI team set out to find new methods for modeling radicalization on Twitter.

More Than Just Keywords

There is a body of prior research about QAnon radicalization that uses keywords – words that are frequently associated with the conspiracy theory – to identify its supporters.

The ISI team hypothesized that, although tweeted keywords were a strong signal of QAnon support, keywords alone were not enough: they needed to look at both “content-based” and “network-based” signals.

The first two signals the team studied were user profiles and tweets, based on keywords and web sources.

Luceri explained, “With QAnon, we have a lot of information about not only the keywords supporters use, but also the web sources and media outlets that they tend to share in their tweets. Some users clearly disclosed and declared their support for QAnon in their profiles or their Twitter account descriptions. And sometimes they pointed to QAnon-related websites, which is another strong indicator.”
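As a rough illustration of how content-based signals like these could be checked for a single account, here is a minimal Python sketch. The keyword and domain lists are placeholders chosen for illustration, and the function name `content_signals` is hypothetical; none of it is taken from the paper.

```python
import re
from urllib.parse import urlparse

# Illustrative placeholders only; these are NOT the lists used in the paper.
KEYWORDS = {"qanon", "wwg1wga"}
DOMAINS = {"q-example.test"}  # hypothetical QAnon-related website

URL_RE = re.compile(r"https?://\S+")
TOKEN_RE = re.compile(r"[a-z0-9]+")


def content_signals(profile_description, tweets):
    """Report which content-based signals fire for one user."""
    profile_tokens = set(TOKEN_RE.findall(profile_description.lower()))
    tweet_tokens = set()
    shared_domains = set()
    for text in tweets:
        for url in URL_RE.findall(text):
            host = urlparse(url).hostname or ""
            if host.startswith("www."):
                host = host[4:]
            shared_domains.add(host)
        # Drop URLs before tokenizing so link text is not counted as keywords.
        tweet_tokens.update(TOKEN_RE.findall(URL_RE.sub(" ", text).lower()))
    return {
        "profile_keyword": bool(profile_tokens & KEYWORDS),
        "tweet_keyword": bool(tweet_tokens & KEYWORDS),
        "shared_domain": bool(shared_domains & DOMAINS),
    }
```

Keeping the three signals separate, rather than collapsing them into one score, makes it visible when an account shares QAnon-related links without ever using the keywords.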

Reading Between the Retweets

The ISI team then went beyond content, and looked at “community-based” signals in the dataset.

A psychology theory known as the “3N Model” has been used to describe radicalization of people in the real world. The team wanted to see if it played out the same way online. Luceri explained, “The 3N model says that people in radicalized groups echo extreme ideologies while they isolate individuals with opposing ideas. So, we wanted to study and see if this could be verified on Twitter.”

By looking at tweets, retweets, follows, and mechanisms such as “I follow back,” the team found strong evidence of the 3N theory in the QAnon Twitter community.
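One simple way to picture the "echoing" side of this pattern is to ask how often members of a community retweet each other rather than outsiders. The Python sketch below computes that share from a list of (retweeter, original author) pairs. The function name, the input format, and the choice of this particular ratio are assumptions made for illustration, not the measure reported in the paper.

```python
def in_group_share(retweets, community):
    """Fraction of retweets made by community members that amplify other members.

    retweets: iterable of (retweeter, original_author) pairs (hypothetical format).
    community: set of user ids assumed to belong to the group.
    """
    inside = 0
    total = 0
    for retweeter, author in retweets:
        if retweeter in community:
            total += 1
            if author in community:
                inside += 1
    return inside / total if total else 0.0
```

A value close to 1 would suggest that members mostly amplify one another; on its own it says nothing about how outsiders are treated, which would need a separate measure.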

Additionally, they looked at “lexical similarity” (think: slang, abbreviations, word pairings, punctuation, and emojis) to quantify how lexically similar tweets within the QAnon community were.
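The article does not say which similarity measure the team used. As one possible sketch, the Python below treats each tweet as a bag of tokens (words, punctuation marks, and emojis) and averages the cosine similarity over all pairs of tweets. The tokenizer, the choice of cosine similarity, and the function names are all assumptions.

```python
import math
import re
from collections import Counter
from itertools import combinations

# Words stay whole; each punctuation mark or emoji becomes its own token.
TOKEN_RE = re.compile(r"\w+|[^\w\s]")


def tokenize(text):
    return TOKEN_RE.findall(text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def mean_pairwise_similarity(tweets):
    """Average cosine similarity over every pair of tweets."""
    vectors = [Counter(tokenize(t)) for t in tweets]
    pairs = list(combinations(vectors, 2))
    if not pairs:
        return 0.0
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)
```

Comparing this average for tweets inside a community against the same average for a random sample of tweets would give a rough sense of how distinctive the community's language is.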

What’s Next?

The researchers showed that radicalization processes should be modeled across multiple dimensions; one single dimension – simply looking at keywords, for example – cannot capture the complex facets of radicalization.

And because the methods used for this research were not specific to Twitter or QAnon, Luceri said, “we plan to expand this study to other scenarios and platforms, including niche platforms where conspiracy theories originate and are reinforced before moving to mainstream media. We are also considering other platforms, like TikTok, YouTube, and Mastodon.”

Luceri will present Identifying and Characterizing Behavioral Classes of Radicalization within the QAnon Conspiracy on Twitter at the 2023 International AAAI Conference on Web and Social Media (ICWSM), June 5 – 8, 2023, in Limassol, Cyprus.

This will be the 17th annual ICWSM, one of the premier conferences for computational social science and cutting-edge research related to online social media. The conference is held in association with the Association for the Advancement of Artificial Intelligence (AAAI).

Published on June 5th, 2023

Last updated on May 16th, 2024