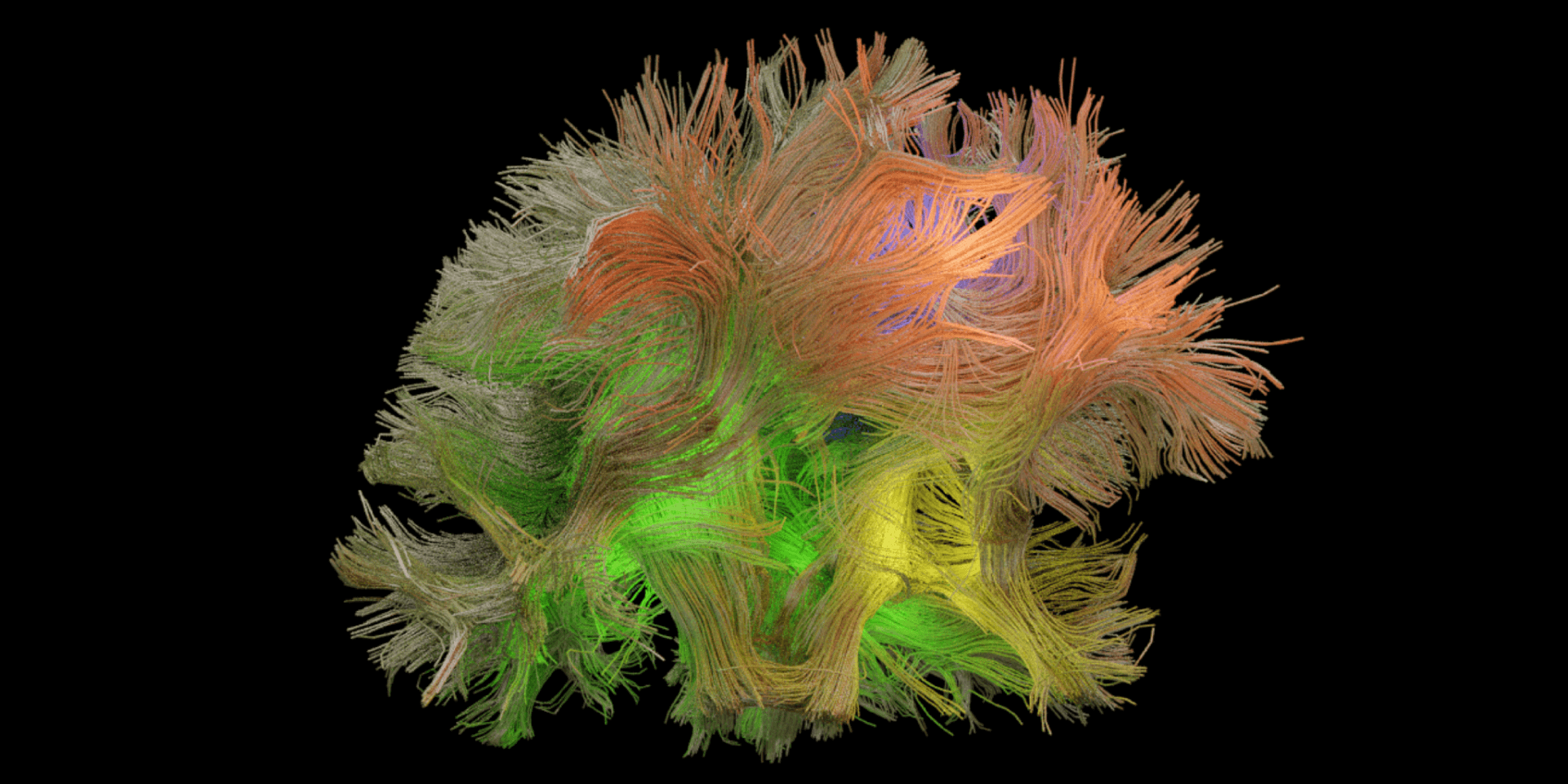

Professor Maryam Shanechi’s research could lead to innovative new treatments for a host of disorders. (PHOTO CREDIT: Omid Sani/Maryam Shanechi)

When we reach for a cup of coffee or catch or throw a ball, our brain coordinates the movement of no fewer than 27 joint angles in our arms and fingers. Exactly how the brain is able to do this is a topic of much debate among researchers.

Now, researchers led by Maryam Shanechi, USC Viterbi assistant professor of electrical and computer engineering and Andrew and Erna Viterbi Early Career Chair, have discovered a signature dynamic brain pattern that predicts naturalistic reach and grasp movements. The discovery, published in Nature Communications, could become a catalyst for developing better brain-machine interfaces and improving treatment for paralyzed patients.

In this study, the goal was to compare the small and large spatiotemporal scales of brain activity. Small-scale activity refers to the spiking of individual neurons, or brain cells; large-scale activity refers to local field potential (LFP) brain waves, which instead measure the aggregate activity of thousands of interacting neurons. Both may contribute to performing reach and grasp movements, but how?

To answer this question, Shanechi and Hamidreza Abbaspourazad, a Ph.D. student in electrical engineering, created a new machine-learning algorithm to extract dynamic neural patterns that co-exist in spiking and LFP activity at the same time and to identify how these patterns relate to each other and to movements. The study was done in collaboration with Bijan Pesaran, professor of neural science at NYU, who performed experiments to collect spiking and LFP brain activity during naturalistic reach and grasp movements using neurophysiology techniques in the field.

By applying the new algorithm to the collected data, they identified commonalities and differences between spiking and LFP activity. From there, they ultimately discovered a pattern common to both that was highly predictive of movements.

“When looking closer, we discovered that this common multiscale pattern actually happened to dominantly predict movement compared to all other existing patterns,” Shanechi said. In other words, the team identified a previously undetected pattern of brain activity associated with reach and grasp movements, one that provides a possible neural signature for them.

Shanechi, who recently received the NIH Director’s New Innovator Award and the ASEE Curtis W. McGraw Research Award, focuses on neurotechnology research; she studies the brain through modeling, decoding, and control of neural dynamics. This publication is just one of many recent projects Shanechi has led to better understand complex neural patterns and neural dysfunctions, with the aim of developing therapies for both physical and mental disabilities. In fact, she has been on a bit of a streak lately, with multiple major Nature publications in the last few months.

“Interestingly,” Shanechi explains, “we found that this neural signature pattern was not only shared between spiking and LFP signals, but also between our different subjects who were making movements.”

This means that the shared pattern can help researchers understand how an individual’s brain controls reach and grasp movements. More importantly, it also suggests that different people may have a similar neural signature when making reach and grasp movements.

Of course, understanding what the brain is doing is only half the battle. Translating brain activity into action is another thing altogether, but Shanechi’s model can do just that.

Abbaspourazad adds, “Our model not only discovers the signature patterns in neural activity but also predicts arm and finger movements quite accurately from these patterns.” This is especially promising in the development of brain-machine interfaces to restore movement in paralyzed patients.

In addition to helping paralyzed patients, Shanechi hopes this research can help researchers better understand the neural mechanisms of movement disorders like Parkinson’s disease and guide future therapies.

“We are hoping that our better understanding of how the brain generates our everyday movements could help us design better brain-machine interfaces that can help millions of disabled patients with neurological injuries and diseases.”

Published on January 27th, 2021

Last updated on April 23rd, 2025