Photo/Catherine Shu

Xiang Ren, the Andrew and Erna Viterbi Early Career Chair and Associate Professor of Computer Science, has been named one of MIT Technology Review’s Innovators Under 35 for the Asia-Pacific region for his groundbreaking work in natural language processing (NLP), a subfield of computer science aimed at giving computers the ability to interpret and speak human languages.

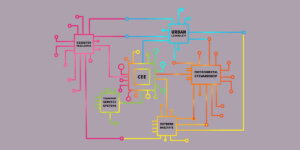

By creating novel learning algorithms and evaluation methods, Ren – who also holds an appointment as a research team leader in the Information Sciences Institute and serves as a member of the USC NLP Group, USC Machine Learning Center and ISI Center on Knowledge Graphs – wants to endow NLP systems with robust common sense, enabling large language models like ChatGPT to better communicate with humans. In March 2024, he received the ACM International Conference on Web Search and Data Mining (WSDM) Test of Time Paper Award for his paper titled “Personalized entity recommendation: a heterogeneous information network approach.” The paper, Ren’s first publication during his PhD career, opened up the possibility of building “personalized” recommender systems for shopping or social media.

Ren recently spoke with USC Viterbi about his research and its implications. The interview has been edited for style and clarity.

What was your reaction to winning the MIT Technology Review Innovators Under 35 Award?

I was very excited and honored because this is one of the most prestigious awards given to researchers and scientists in the field. It’s a huge recognition of what I’ve done during my years at USC.

What is NLP?

I’ll start with the K-12 version. NLP is a branch of computer science through which computers or AIs are given the ability to communicate with humans. One way to facilitate communication is to understand what humans are saying about their own experiences, the knowledge they have, etc. Another direction is that the computer must produce human-like responses or conversations so that it can communicate simply with humans. In an ideal world, we want a computer to be able to communicate like another human. We want the computer to make you feel comfortable. On the more engineering side of things, NLP can be perceived as building large-scale neural language models that are able to learn from massive amounts of human language data gathered from the web and sources like Wikipedia articles and social media. Technically speaking, this is a statistical model that compresses lots of language information and is able to turn this knowledge into coherent speech.

How do you implement “common sense” into NLP systems? What are the challenges of doing so?

Typically, there are two ways of injecting common sense into these NLP systems. One is in a more human-like way. I present you with statements of common-sense knowledge about the situations you are handling. For example, if your task is to make a pancake, then there’s going to be a step where you should find milk. The common sense in this situation will have you look in your kitchen for milk, rather than your bedroom. If we simply state this information in natural language, the NLP system will be able to execute tasks. The second way is more implicit. We let the language model read through large amounts of common-sense situations written in different texts, and these models might implicitly summarize these things. By scouring a large number of sources, these models will discover that milk, for example, is likely found in the fridge. The biggest challenge in building common sense into NLP systems is reporting bias. Most of us think common sense is common, so we don’t talk about it. So, if you look through news articles or social media, they don’t explicitly write down common-sense information. Therefore, knowledge has to be induced from large amounts of data.

Why are advances in NLP important?

The ultimate goal of people building NLP systems is to achieve artificial general intelligence, which means that the system is able to match or surpass humans in accomplishing things or doing tasks. We have actually made immense progress in recent years. Take for example ChatGPT, which is able to translate speech, summarize stories, and create images better than a large majority of the human population. If you think about the most fascinating capabilities of humans, language and an understanding of language are part of that. Language has to be included in AI if it’s to match humans. So that means the NLP problem, which consists of understanding languages and being able to generate text, is a key capability that we need to improve. Human language is very nuanced and complicated, and implementing it in AI is very challenging. NLP does not test you on simple grammar and vocabulary. It tests you on situational context, an understanding of the other speaker, and whether two speakers can find common ground through which they can execute a task. Indeed, it’s not just a computational problem, it’s linguistic, psychological, and even philosophical.

What sparked your interest in NLP and computer science?

This would date back to my junior year in college. I was very into mathematics and physics before knowing too much about computer science, not to mention AI. What’s fascinating to me about computer science is that many things can be deduced based on rigorous math. When you write a program, the program executes in its expected way. It’s a very controllable subject. This makes me feel very excited because you’re able to create a program that mirrors an image or an idea in your mind. You can make it so complex that it achieves more and more, but ultimately, it’s us as humans who are creating such powerful models. I was lucky enough to be introduced to AI at the end of my undergraduate experience. AI fascinates me because it deals with computer science, but also lots of other subjects like cognitive science, psychology, and many other disciplines. The fact that AI might one day be able to assist and collaborate with humans to expand our understanding of the world is thrilling.

Where do you see the future of NLP and AI?

I think there are two broad directions that I see NLP and AI going in. Number one is this idea of knowledge transfer. AI is able to read the entire web and news articles published in a day. It becomes a very comprehensive knowledge base. It is also supposed, and this has been the source of many recent debates, to be averse to opinions. There’s a huge potential for AI to broadcast and disseminate information and educate people who have limited access to resources. Number two, and this is a scarier one, it’s going to replace some percentage of people in business processes. For example, AI is likely going to replace some parts of customer service and sales jobs. There are pros and cons to this. The pro is that it makes businesses more efficient and lightweight. The con, of course, is that many will lose jobs and job opportunities because of AI. The big question here is privacy and ownership of your knowledge. When you are using ChatGPT, you are feeding it information, sometimes your own thoughts. We do this for free in exchange for using ChatGPT for free. At the same time, many don’t realize that we are sacrificing our own knowledge to strengthen AI. When this AI becomes so strong that it is able to replace jobs, you still won’t have any ownership over your contribution to the AI’s strength. We have to give people ownership of the data they give AI, so that it can reward individuals who contribute to its efficiency.

Published on February 7th, 2024

Last updated on March 7th, 2024