Illustration by Alya Rehman, Text by Tiana Lowe

Few rites of passage in the adolescent’s academic life receive as much universal vitriol as the SAT. Teachers worry that our society’s obsession with standardized testing encourages reductive “teaching to the test.” Students lament the hours and hundreds, or sometimes even thousands, of dollars invested into improving their test scores by a mere margin of points. However, no portion of the SAT stirs quite as much controversy as the written essay, which, prior to this year, counted for around 30 percent of the total SAT score.

Essays for the SAT and the GMAT are scored on a six-point scale by two human graders, whose scores are averaged if they differ by no more than one point. If the difference exceeds one point, a third and final grader is brought in to score the essay.
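The two-grader rule can be sketched in a few lines of Python. This is only an illustration of the rule as described here, not ETS's actual procedure: the function name `combine_scores` is hypothetical, the valid score range of 0 to 6 is an assumption, and because the article does not say how the third grader's score is used, the sketch simply signals that a third grader is needed.

```python
from typing import Optional

MAX_SCORE = 6        # essays are scored out of six, per the article
MIN_SCORE = 0        # assumption: the article does not state a minimum
MAX_DIFFERENCE = 1   # scores this close together are averaged


def combine_scores(first: int, second: int) -> Optional[float]:
    """Average two graders' scores, or return None if a third grader is needed.

    Hypothetical helper: it only models the rule described in the text.
    """
    for score in (first, second):
        if not MIN_SCORE <= score <= MAX_SCORE:
            raise ValueError(f"score {score} is outside {MIN_SCORE}-{MAX_SCORE}")

    if abs(first - second) <= MAX_DIFFERENCE:
        return (first + second) / 2

    # The graders disagree by more than one point: a third grader is
    # brought in. How that score is combined is not specified in the
    # article, so no final score is computed here.
    return None


if __name__ == "__main__":
    print(combine_scores(4, 5))  # graders agree closely: averaged
    print(combine_scores(2, 5))  # graders disagree: third grader needed
```

Returning `None` rather than guessing a final score keeps the sketch within what the article actually states.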

“Essay grading has been seen by many as a holistic, inextricable human process: slow, but reliable. However, automatic grading creates the opportunity to accelerate learning by enabling students to self-test and gain access to writing feedback at a faster pace than that supported by a regular teaching environment,” said Daniel Marcu, director of strategic initiatives at USC Viterbi’s Information Sciences Institute and research associate professor in the Department of Computer Science.

Recognizing this opportunity, ETS – a billion-dollar non-profit with a monopoly over many aspects of the testing industry, including college and graduate school entrance exams – enlisted Marcu, an expert in natural language processing, over a decade ago to help develop an algorithm to do the seemingly impossible: grade essays.

Marcu developed discourse understanding algorithms that have become part of an ETS product called e-rater, which was subsequently used to grade millions of essays a year in online training environments developed by ETS. Marcu implemented machine learning models that understand the mechanics and rhetoric of well-written texts.

Given the success of that collaboration, ETS has recently provided a research gift to Marcu. Marcu is using the gift to develop algorithms that can automatically grade content-heavy questions, such as those found in the Common Core curriculum.

In the nearly two decades between developing these two algorithms, Marcu’s accomplishments have included founding, growing, and selling Language Weaver, a company that brought the first statistical machine translation software to market, years ahead of Google and Microsoft, and, more recently, founding Fair Trade Translation, an online platform that enables and promotes the implementation of fair trade principles in the language services market.

God in the machine

While ETS’s e-rater has become a revolutionary industry standard, not all were initially convinced that the prospects of computer-graded essays were positive.

“There was quite a bit of resistance for introducing technology which can help or even replace humans, but in the end, we were able to develop an algorithm which could take apart a text and score not just the discourse mechanics, but also the strength of an argument,” said Marcu.

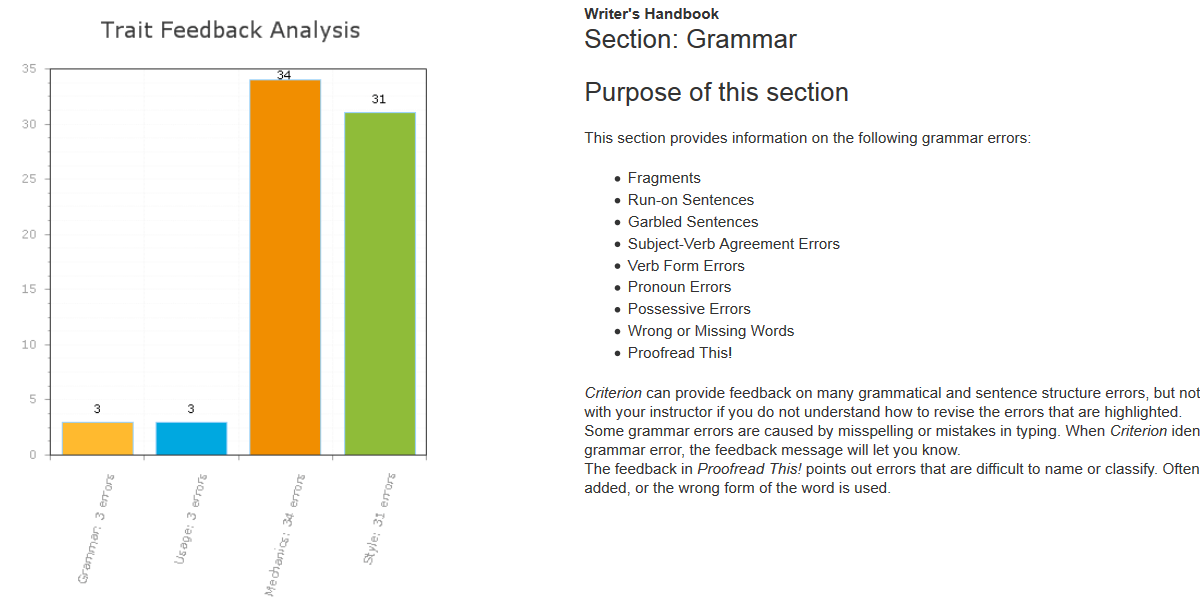

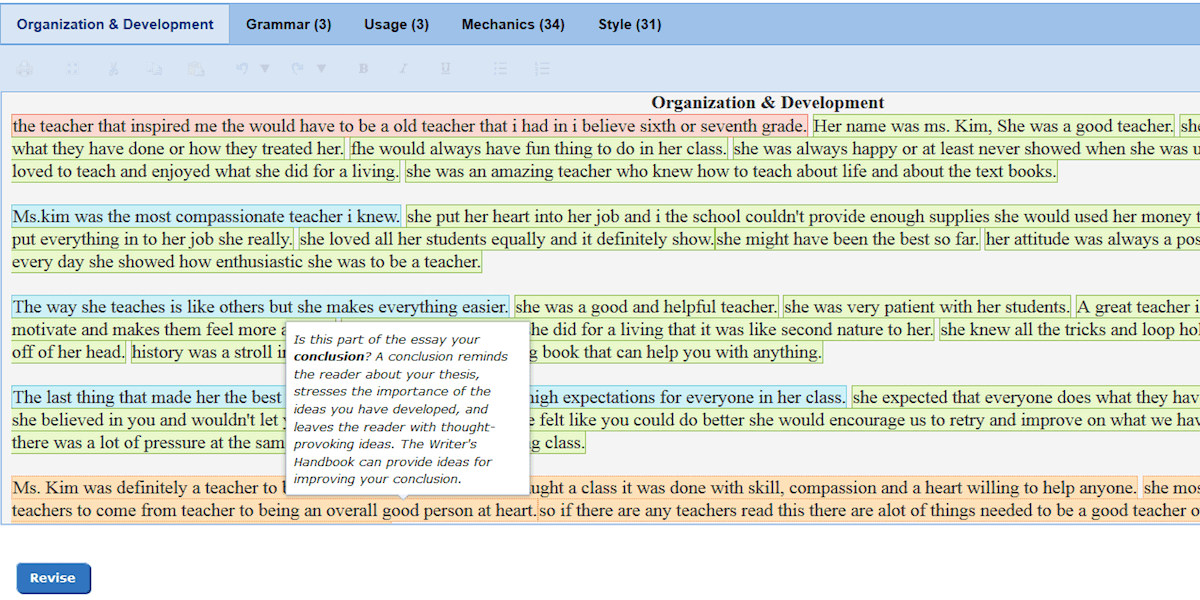

An example of the feedback students receive from the e-rater. Photos courtesy of ETS

While objections to grading by rubric or algorithm may call into question the value of the standardized test as a whole, one aspect of the e-rater has undoubtedly improved educational prospects across the board.

“If a computer is capable of grading discourse on top of grammar and vocabulary, then it could also give feedback,” said Marcu. ETS now offers its Criterion Online Writing Evaluation Service software for teachers and students alike to use.

Daniel Marcu. Photo/ ISI

“Some students can afford to hire tutors, but now all students have access to a relatively cheap software in their homes,” said Marcu. “As the test taking process becomes increasingly based on technology, the production costs go down, making success more accessible to all.”

Given that Kaplan and the Princeton Review’s “best value” SAT tutoring programs start at $999 and $1,999 respectively, Marcu’s e-rater comes as a much-needed market alternative. School districts across the country offer the software for students to use as a highly effective substitute.

Now the question arises of whether Marcu can replicate these benefits for K-12 students across the country with a Common Core-specific e-rater, referred to as Short Answer Assessment.

“The SAT and GMAT essay writing assessments have historically been based on judging a student’s ability to produce good arguments. Now with writing for the Common Core, it’s not about general argumentation alone; it’s about understanding specific texts and images and producing answers that are deeply grounded into the content and semantics of these texts and images,” said Marcu.

“In the long run, it would be wonderful if we could track students’ progress over time by measuring their ability to produce qualitative answers that cannot be boiled down to a simple choice between alternatives. We’re not there yet but I think it’s a worthy vision.”

Published on October 25th, 2016

Last updated on May 16th, 2024